
Points to ponder while writing automation

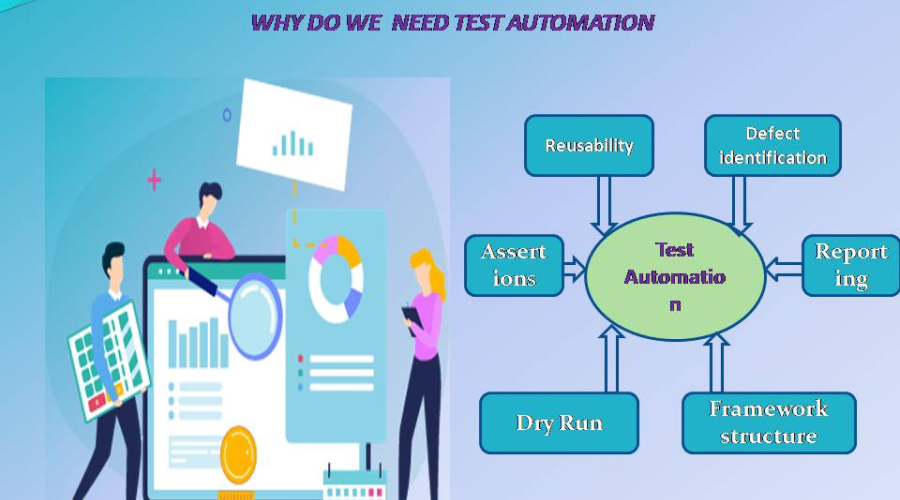

Why do we do automation testing?

Is it because it’s cheaper? If you consider the initial cost of setting up automation testing, it could be more cost-effective to hire more manual testers and complete the regression testing. Then why do we go for automation? It’s to fail faster. It’s to find defects faster than a human could. It’s to avoid manual intervention. It’s to run load tests effectively and try to break the system faster. Why should we fail fast? Because to mend the system faster, we have to break it faster. That’s the main reason we go for automation. So, what are the points to ponder if we want our automation scripts to find defects? Let’s look at them below.

Framework Structure

A framework is a set of rules and reusable functions that makes the automation tester’s life easier. It is important to have the framework ready early because we work to timelines, so choosing the framework should be part of our planning process. Broadly, any project has two options: reuse an existing open-source framework, or develop a custom framework for the project. Both have their own advantages and disadvantages, but either way, automation scripting is better with a framework than without one.

Reusability

With a good framework in place, we can also demand good scripting practices. But that also depends on the automation experts in the team. There will always be a stark difference between an experienced professional and an entry-level trainee: an expert programmer will write highly reusable scripts, while a learner (no complaints) may sometimes think that copy-paste is reusability. Regular training on good coding practices, framework documentation, and tools like SonarLint can help avoid duplicated code.
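To make the copy-paste versus reuse point concrete, here is a minimal Python sketch. `FakeLoginPage`, `verify_login` and the `LOGIN_CASES` data are hypothetical names invented for illustration; in a real project the page object would wrap a UI driver or API client from your own framework. The idea is that the login check lives in one function, and new scenarios are added as data rows rather than as duplicated code.

```python
from dataclasses import dataclass


@dataclass
class FakeLoginPage:
    """Hypothetical stand-in for a real page object or API client."""
    accounts: dict

    def login(self, username: str, password: str) -> bool:
        # Succeeds only when the stored password matches.
        return self.accounts.get(username) == password


def verify_login(page: FakeLoginPage, username: str, password: str,
                 should_succeed: bool) -> None:
    """One reusable step: every script calls this instead of pasting its own copy."""
    succeeded = page.login(username, password)
    assert succeeded == should_succeed, (
        f"login as {username!r}: expected success={should_succeed}, got {succeeded}"
    )


# Data-driven reuse: a new case is a new row, not a new copy of the code.
LOGIN_CASES = [
    ("asha", "s3cret", True),
    ("asha", "wrong", False),
    ("unknown", "s3cret", False),
]

if __name__ == "__main__":
    page = FakeLoginPage(accounts={"asha": "s3cret"})
    for username, password, should_succeed in LOGIN_CASES:
        verify_login(page, username, password, should_succeed)
    print(f"{len(LOGIN_CASES)} login cases passed")
```

If the login flow changes, only `verify_login` needs updating, not every script that logs in.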

Defect identification

There is nothing to be proud of until our automation scripts start finding defects. The main purpose of testing is to find defects and ship a quality product. As mentioned at the start, if our scripts execute faster than manual testers, they should also find defects faster. That is only possible when we add validations and assertions at the right points, and those points can be derived from good manual test cases.
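As a sketch of what "assertions at the right points" can look like, the hypothetical `Cart` class below stands in for the application under test, and `test_cart_flow` mirrors a four-step manual test case: each step is followed by the check for that step's expected result, so a failure points straight at the step that broke. All names here are illustrative assumptions, not part of any specific tool.

```python
from dataclasses import dataclass, field


@dataclass
class Cart:
    """Hypothetical system under test: a simple shopping cart."""
    items: dict = field(default_factory=dict)

    def add(self, sku: str, qty: int) -> None:
        if qty <= 0:
            raise ValueError("quantity must be positive")
        self.items[sku] = self.items.get(sku, 0) + qty

    def remove(self, sku: str) -> None:
        self.items.pop(sku, None)

    def total_quantity(self) -> int:
        return sum(self.items.values())


def test_cart_flow() -> None:
    cart = Cart()
    # Step 1: add an item -> expected: cart holds 2 units.
    cart.add("SKU-1", 2)
    assert cart.total_quantity() == 2, "step 1: add item"
    # Step 2: add the same item again -> expected: quantities merge into one line.
    cart.add("SKU-1", 1)
    assert cart.items == {"SKU-1": 3}, "step 2: quantities merge"
    # Step 3: add with zero quantity -> expected: rejected with an error.
    try:
        cart.add("SKU-2", 0)
    except ValueError:
        pass
    else:
        raise AssertionError("step 3: zero quantity should be rejected")
    # Step 4: remove the item -> expected: cart is empty.
    cart.remove("SKU-1")
    assert cart.total_quantity() == 0, "step 4: remove item"


if __name__ == "__main__":
    test_cart_flow()
    print("cart flow passed")
```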

Assertions

Defects are identified through assertions, so to create automation scripts that find defects, we have to fill them with a variety of assertions. In UI automation, for example, we can assert on the page URL and title, the text content of a web element, and the text content of the page. In API automation, validating the status code, schema and headers gives more insight. These are just examples; every type of test script should have its own set of assertions. As mentioned in the previous section, a good set of manual test cases designed with techniques like boundary value analysis and equivalence partitioning can work wonders.
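The API checks mentioned above can be sketched in plain Python. `ApiResponse` is a hypothetical stand-in for whatever response object your HTTP client returns, and `USER_SCHEMA` is an assumed, deliberately simple schema (field name to expected type); a real project would more likely use a full JSON Schema validator. `check_response` collects every failed check instead of stopping at the first one, which makes reports more informative. `boundary_values` shows how boundary value analysis from the manual test cases could produce inputs for an inclusive numeric range.

```python
from dataclasses import dataclass


@dataclass
class ApiResponse:
    """Hypothetical minimal response shape; real HTTP clients differ."""
    status_code: int
    headers: dict  # plain dict: case-sensitive, unlike real HTTP header maps
    body: dict


# Assumed schema for illustration: field name -> required Python type.
USER_SCHEMA = {"id": int, "name": str, "email": str}


def check_response(resp: ApiResponse, expected_status: int, schema: dict) -> list:
    """Return a list of failure messages; an empty list means every check passed."""
    failures = []
    # 1. Status code
    if resp.status_code != expected_status:
        failures.append(f"status: expected {expected_status}, got {resp.status_code}")
    # 2. Headers: the body should be declared as JSON
    content_type = resp.headers.get("Content-Type", "")
    if not content_type.startswith("application/json"):
        failures.append(f"header Content-Type: got {content_type!r}")
    # 3. Schema: required fields present with the expected types.
    # Note: isinstance(True, int) is True in Python, so a bool would pass as an int here.
    for name, expected_type in schema.items():
        if name not in resp.body:
            failures.append(f"schema: missing field {name!r}")
        elif not isinstance(resp.body[name], expected_type):
            actual = type(resp.body[name]).__name__
            failures.append(f"schema: {name!r} is {actual}, expected {expected_type.__name__}")
    return failures


def boundary_values(low: int, high: int) -> list:
    """Boundary value analysis for an inclusive integer range [low, high]."""
    if low > high:
        raise ValueError("low must not exceed high")
    # Just outside, on, and just inside each boundary; the set removes duplicates for tiny ranges.
    return sorted({low - 1, low, low + 1, high - 1, high, high + 1})
```

For a field that accepts ages 18 to 60, `boundary_values(18, 60)` returns `[17, 18, 19, 59, 60, 61]`: 17 and 61 should be rejected and the rest accepted, which gives each input a clear expected result to assert on.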

Reporting

Reporting is one of the key factors that define the quality of our automation scripts. A good report helps us find out why an automation script passed or failed. Also, following from the earlier points, a good framework lets us decide what type of report (Word, Excel or HTML) we want and, in UI testing, when the script should take screenshots. Irrespective of the type of report a tool or framework produces, every report must include the Test_Case_ID, the Test_Steps, Pass or Fail for each step, the machine or system on which the execution was done, and the time of execution.
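The minimum report fields listed above can be captured in a small data structure. This is a sketch under assumptions: `ExecutionReport`, `StepResult` and the column names are made up for illustration, and the output is CSV (which Excel can open) rather than Word or HTML. Most frameworks and reporting plugins provide their own report formats, so treat this as the checklist in code form, not a replacement for them.

```python
import csv
import io
import platform
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class StepResult:
    step: str
    passed: bool


@dataclass
class ExecutionReport:
    """One test case's results plus the execution context every report should carry."""
    test_case_id: str
    steps: list = field(default_factory=list)
    # Network name of the machine running the test (empty string if it cannot be determined).
    machine: str = field(default_factory=platform.node)
    # Execution time in UTC, ISO 8601 format.
    executed_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat(timespec="seconds")
    )

    def record(self, step: str, passed: bool) -> None:
        self.steps.append(StepResult(step, passed))

    @property
    def overall(self) -> str:
        # A test case with no recorded steps is treated as a failure, not a pass.
        return "Pass" if self.steps and all(s.passed for s in self.steps) else "Fail"

    def to_csv(self) -> str:
        """One row per step; the CSV opens directly in Excel."""
        buf = io.StringIO()
        writer = csv.writer(buf, lineterminator="\n")
        writer.writerow(["Test_Case_ID", "Test_Step", "Result", "Machine", "Executed_At"])
        for s in self.steps:
            writer.writerow([self.test_case_id, s.step, "Pass" if s.passed else "Fail",
                             self.machine, self.executed_at])
        return buf.getvalue()
```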

Dry Run

If we have prepared food, we would taste it at least once before serving it, right?! The same goes for automation scripts. What happens if we don’t? With the dinner, there is a 50:50 chance of it turning out well or badly. With automation scripts, the chance of faring well is 20:80 or even less, since the scripts depend on many factors such as the execution environment, test data, application availability and system memory. A seamless end-to-end execution, at least 5 to 10 times, makes sure the script can become a dependable part of regression runs or test data creation.

CI/CD flawless run

Every organisation or project chooses its own set of tools, frameworks and CI/CD tools. There are lots of wonderful tools available right now, such as Jenkins and GitHub Actions, which work alongside version control systems like Git and SVN. We have to choose the CI/CD tools that satisfy our needs and are compatible with our framework and automation tool. There are many factors to consider while enabling CI/CD for automation scripts, and one of them is execution speed: the speed the automation tool supports versus the speed at which the CI/CD tool runs. Sometimes the CI/CD tool is too fast and the automation tool too slow, or vice versa. So, a seamless run through the CI/CD tools at least 5 to 10 times is a must to make future automated executions smooth. Many projects that did only a local dry run before integrating their scripts into their DevOps pipeline have ended up wasting their resources’ time and money, because they forgot to do a dry run through their CI/CD tools.
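One common way to absorb the speed mismatch between a CI/CD agent and the application under test (a technique this section does not prescribe; it is suggested here as an assumption) is to replace fixed sleeps with polling: wait until a condition holds, up to a timeout. `wait_until` is a hypothetical helper; UI tools ship their own equivalents, such as Selenium's `WebDriverWait`.

```python
import time
from typing import Callable


def wait_until(condition: Callable[[], bool], timeout: float = 10.0,
               interval: float = 0.2) -> bool:
    """Poll `condition` until it returns True or `timeout` seconds pass.

    Returns True as soon as the condition holds, False if the timeout expires.
    A fast agent finishes early; a slow one gets up to `timeout` seconds.
    """
    deadline = time.monotonic() + timeout  # monotonic clock: immune to system clock changes
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

For example, `wait_until(lambda: service_is_ready(), timeout=30)` (with `service_is_ready` standing in for your own readiness check) waits only as long as the environment actually needs, instead of a fixed `time.sleep(30)` that is too long on a fast agent and too short on a slow one.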

Over Engineering

Have you ever tried cooking from a recipe you found and ruined your dinner? (I know everybody watches YouTube channels to learn cooking instead of asking the experts at home.) Sounds familiar, right? The same thing can happen in automation scripting. Too much encapsulation, abstraction and other clever coding techniques can reduce code readability, which makes it harder for other automation experts to refactor or optimize the code later. Keep the rules simple and follow them to make automation a piece of cake, but don't overdo it.